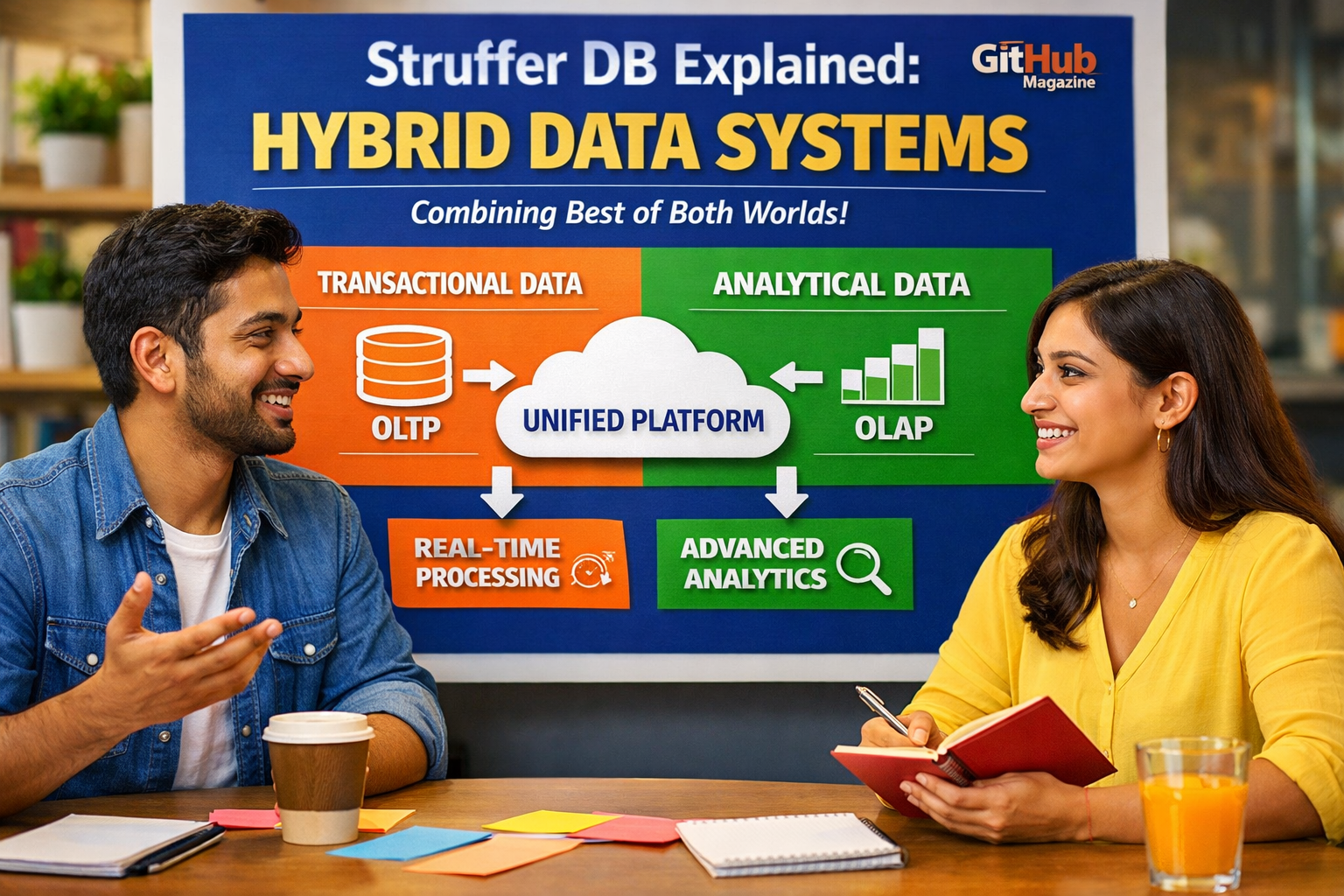

Sruffer DB Explained: Hybrid Data Systems

I remember first encountering the phrase “sruffer db” in a developer discussion that seemed almost cryptic at first glance. Within moments, it became clear that this was not a formal product or standardized database, but rather an evolving idea born out of necessity. In simple terms, “sruffer db” refers to a hybrid database approach that blends buffering capabilities with persistent storage, allowing systems to process real-time data streams without losing reliability. This idea answers a pressing need: how to handle massive, continuous flows of data while still maintaining the integrity and durability that traditional databases provide.

As digital systems increasingly rely on real-time information, the gap between speed and reliability has become more visible. Streaming platforms excel at handling rapid data flows but often lack long-term guarantees, while traditional databases prioritize accuracy but struggle with high-velocity inputs. The “sruffer db” concept attempts to bridge this divide. It introduces a unified architecture where data can be processed instantly and stored securely within the same system.

What makes this idea compelling is not just its technical ambition but its relevance across industries. From financial trading systems to IoT networks and social platforms, the demand for immediate yet reliable data processing is growing rapidly. Whether or not the term becomes widely adopted, the thinking behind it reflects a major shift in how modern data systems are designed.

The Evolution of Databases Toward Hybrid Models

I have always found it fascinating how databases have evolved alongside technological demands. Early relational systems were designed with strict rules and structured queries, prioritizing accuracy and consistency above all else. As the internet expanded, these systems began to show limitations, particularly when faced with massive, distributed workloads. This led to the rise of NoSQL databases, which sacrificed some consistency in exchange for scalability and flexibility.

The next major shift came with streaming technologies, which redefined data as a continuous flow rather than static records. This introduced a new challenge: how to reconcile real-time processing with long-term storage. The emergence of hybrid models reflects an attempt to answer this question, combining the strengths of multiple systems into a cohesive architecture.

| Era | Dominant Model | Key Feature | Limitation |

|---|---|---|---|

| 1970s–1990s | Relational DB | Strong consistency | Limited scalability |

| 2000s | NoSQL DB | Horizontal scaling | Eventual consistency issues |

| 2010s | Streaming Platforms | Real-time processing | Weak persistence |

| 2020s | Hybrid Systems | Unified data flow | Architectural complexity |

As Martin Kleppmann observed, modern systems increasingly rely on multiple specialized components working together. The concept behind “sruffer db” fits directly into this trajectory, representing a step toward deeper integration rather than further fragmentation.

Understanding the Core Concept Behind “Sruffer DB”

When I break down the idea of a “sruffer db,” it becomes clear that its strength lies in combining two traditionally separate functions: buffering and persistence. Buffers allow systems to temporarily hold data in memory for rapid processing, while databases ensure that information is stored reliably over time. The innovation here is merging these capabilities into a single, cohesive system.

This approach is particularly valuable in environments where data arrives continuously and must be processed instantly. Instead of moving data between separate systems, a hybrid model allows for immediate handling and long-term storage within the same framework. This reduces latency and simplifies architecture.

The structure of such systems typically includes in-memory processing layers, persistent storage components, and mechanisms for replication and fault tolerance. By integrating these elements, a “sruffer db” can deliver both speed and reliability.

Michael Stonebraker’s argument that no single system can handle all workloads remains relevant. What makes this concept interesting is that it does not attempt to replace specialization entirely but rather to integrate key functionalities where they matter most.

Why Real-Time Data Demands Are Driving Innovation

I have noticed that the push toward real-time data is no longer limited to niche applications. It is now a fundamental expectation across industries. Whether it is tracking financial transactions, monitoring connected devices, or updating social feeds, the need for immediate insights has become universal.

Traditional databases often struggle with high-frequency updates, introducing delays that can be costly in time-sensitive environments. On the other hand, streaming systems handle speed effectively but may not guarantee long-term data integrity. This creates a tension that hybrid approaches aim to resolve.

| Use Case | Traditional DB | Streaming System | “Sruffer DB” Approach |

|---|---|---|---|

| Financial trading | High latency | Fast but volatile | Fast + durable |

| IoT monitoring | Limited scalability | High throughput | Scalable + persistent |

| Social media feeds | Delayed updates | Real-time flow | Real-time + stored |

The ability to combine speed with durability is what makes this concept so appealing. Organizations that can act on real-time data while ensuring its accuracy gain a significant competitive advantage.

The Role of Distributed Systems in Modern Data Architecture

As I explored this topic further, it became clear that distributed systems play a central role in making hybrid models possible. By distributing data across multiple nodes, these systems achieve scalability and resilience. However, they also introduce complexity, particularly when it comes to maintaining consistency.

The CAP theorem highlights the trade-offs involved, forcing system designers to choose between consistency, availability, and partition tolerance. Hybrid systems attempt to navigate these trade-offs more dynamically, adjusting behavior based on specific needs.

In practice, this means that a system might prioritize speed in one scenario while enforcing strict consistency in another. This flexibility is essential for handling diverse workloads within a single architecture.

The growing reliance on distributed systems reflects a broader shift toward decentralization in computing, where no single node is responsible for all operations.

Industry Implementations That Reflect the Concept

While the term “sruffer db” itself may not be widely recognized, I see its principles reflected in several existing technologies. Apache Kafka, for example, combines streaming capabilities with log-based storage, allowing data to be processed and retained simultaneously. Redis offers in-memory speed while supporting persistence, and Cassandra provides scalable storage across distributed environments.

These systems demonstrate that the industry is بالفعل moving toward integrated solutions. They blur the lines between traditional categories, making it increasingly difficult to distinguish between databases and data-processing platforms.

What stands out is that these technologies are not isolated innovations but part of a broader trend toward convergence. The idea behind “sruffer db” simply provides a conceptual framework for understanding this shift.

Challenges and Limitations of Hybrid Database Models

Despite their advantages, I cannot ignore the challenges associated with hybrid systems. Complexity is perhaps the most significant issue. Combining multiple functionalities into a single system increases the difficulty of design, deployment, and maintenance.

Other challenges include maintaining consistency across distributed nodes, managing operational overhead, and ensuring system reliability under varying conditions. Debugging such systems can also be more complicated due to their interconnected components.

Additionally, not every application requires this level of sophistication. For simpler use cases, traditional databases or streaming systems may still be more efficient and easier to manage.

The key is understanding when a hybrid approach is truly necessary and when it introduces unnecessary complexity.

The Future of Database Design

Looking ahead, I see the principles behind “sruffer db” continuing to influence how data systems are built. As technologies like artificial intelligence and edge computing evolve, the demand for fast, reliable data processing will only increase.

Future systems are likely to become even more integrated, combining multiple functionalities into unified platforms. This may include serverless architectures, autonomous data management, and deeper integration with edge devices.

The concept of a “sruffer db” may eventually evolve into a formal category or fade into broader terminology. Regardless, its underlying ideas are already shaping the direction of database innovation.

What matters most is the shift toward systems that can adapt to changing demands without sacrificing performance or reliability.

Takeaways

- “Sruffer db” represents a hybrid approach combining buffering and persistent storage

- It reflects the evolution of databases toward integrated, real-time systems

- Existing technologies already demonstrate similar principles

- The concept addresses the balance between speed and durability

- Complexity remains a major challenge in implementation

- Distributed systems are essential to making hybrid models work

Conclusion

I find the idea of “sruffer db” particularly intriguing because it captures a moment of transition in technology. It is less about a specific product and more about a way of thinking that challenges traditional boundaries. As data continues to grow in both volume and velocity, the need for systems that can handle real-time processing without sacrificing reliability becomes increasingly important.

This shift is not just technical but conceptual. It forces engineers and organizations to rethink how data is managed, moving away from isolated systems toward integrated solutions. While the term itself may not endure, the ideas it represents are likely to remain influential.

In the years ahead, the success of data systems will depend on their ability to balance speed, scalability, and durability. Whether labeled as “sruffer db” or something else, hybrid architectures will play a central role in achieving that balance.

FAQs

What does “sruffer db” mean?

It refers to a hybrid database concept combining buffering for speed and persistence for long-term storage.

Is it a real product or technology?

No, it is not a formal technology but an emerging idea reflecting trends in database design.

Why is it important?

It addresses the need for systems that handle real-time data while ensuring reliability.

Are there examples of similar systems?

Yes, technologies like Kafka, Redis, and Cassandra demonstrate similar hybrid characteristics.

Who benefits from this approach?

Industries that rely on real-time data, such as finance, IoT, and social media, benefit the most.